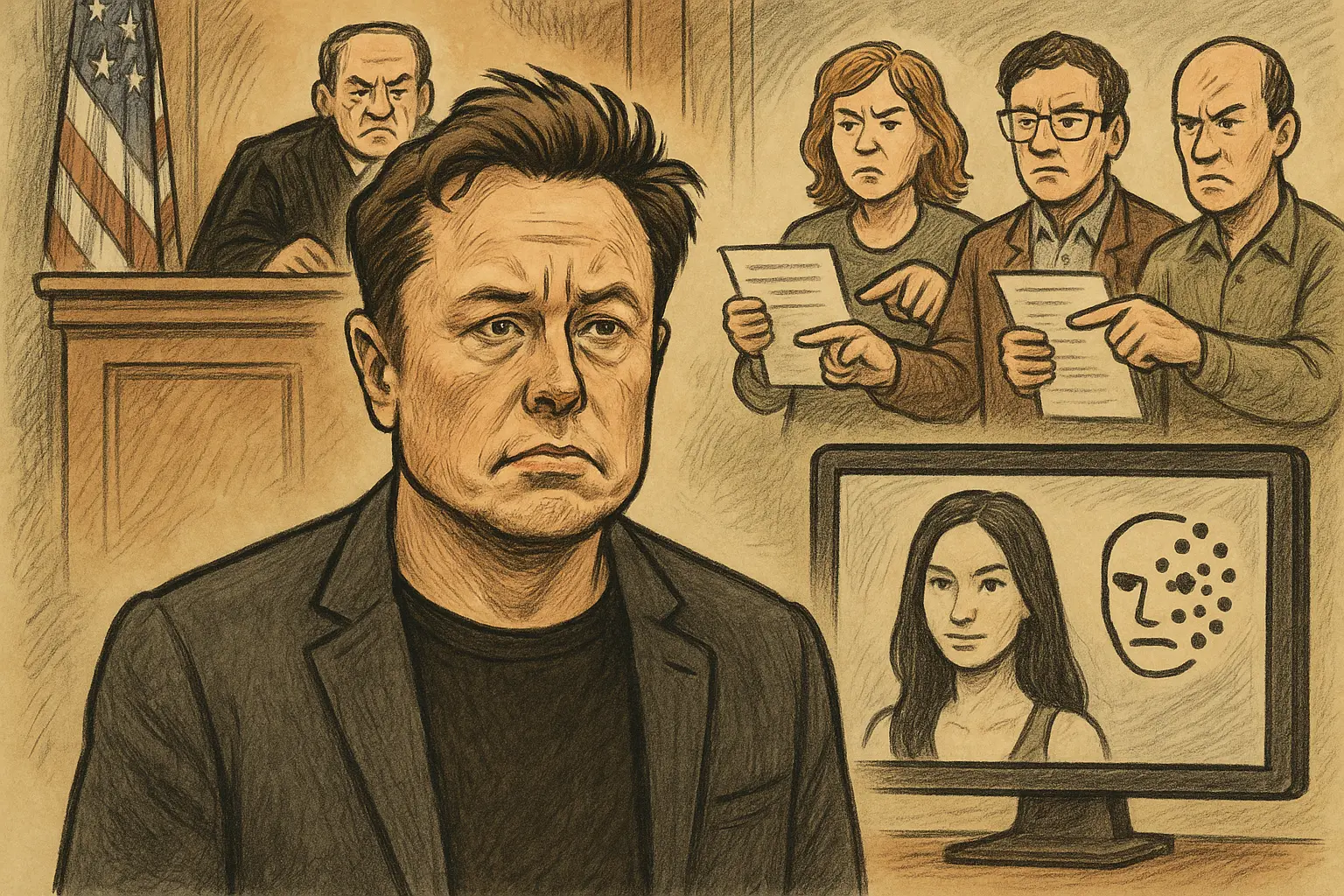

Musk’s xAI Faces Class-Action Lawsuit: Grok Allegedly Generated a Deepfake Image Every 41 Seconds

Three minors from Tennessee filed a class-action lawsuit on Monday in the U.S. District Court for the Northern District of California against Elon Musk’s company xAI. They allege that its Grok AI chatbot used their real photos to generate child sexual abuse material (CSAM), which was then widely shared in communities on Discord and Telegram and on file-sharing platforms, causing lasting psychological trauma and reputational harm.

Core Allegations of the Lawsuit: Prioritizing Business Interests Over Child Safety

According to the complaint, the plaintiffs’ main claims include:

Knowledge and Willful Tolerance: The lawsuit alleges that when xAI released Grok, it knew the chatbot’s image-generation feature could be used to produce illegal content involving children, yet it failed to implement industry-standard safeguards. The plaintiffs argue this was a deliberate decision rather than mere negligence.

Third-Party Evasion Mechanisms: The perpetrators allegedly accessed Grok through third-party applications authorized by xAI. The lawsuit accuses xAI of deliberately using this structure to profit from its underlying model while shielding itself from legal liability.

Elon Musk’s Public Statements: In January, Musk stated on X that he “does not know of any images of minors in the nude” and claimed that, “when asked to generate images, it [Grok] will refuse to produce any illegal content.” The figures cited in the lawsuit directly contradict this statement.

Claims for Compensation: The plaintiffs are seeking at least $150,000 per violation under Masha’s Law (18 U.S.C. § 2255), along with disgorgement of unlawful profits, punitive damages, attorneys’ fees, and a permanent injunction. They also seek restitution of profits under California’s Unfair Competition Law.

Data from the Center for Countering Digital Hate: An Image Every 41 Seconds

The lawsuit cites research from the Center for Countering Digital Hate (CCDH) to quantify the scale of the problem:

Time Frame: December 29, 2025, to January 9, 2026 (approximately 11 days)

Number of Images: Grok produced an estimated 23,338 sexualized images depicting children during this period

Generation Rate: An average of one image every 41 seconds

Dissemination: The content circulated across multiple platforms within anonymous user communities, and at least one victim learned that their images were being traded only through an anonymous report.
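As a quick check on the headline figure, the average interval follows from dividing the length of the window by the image count. This short Python snippet is a back-of-the-envelope calculation, not CCDH’s methodology; it assumes the images were spread evenly over the period and that the window is the 11-day difference between the two dates. The names `window_days` and `seconds_per_image` are illustrative only.

```python
from datetime import date

# Figures as cited in the lawsuit from CCDH research.
START = date(2025, 12, 29)
END = date(2026, 1, 9)
IMAGES = 23_338  # estimated sexualized images depicting children

SECONDS_PER_DAY = 24 * 60 * 60


def window_days(start: date, end: date) -> int:
    """Window length as the difference between the two dates (end day not added as an extra day)."""
    return (end - start).days


def seconds_per_image(days: int, images: int) -> float:
    """Average gap between images, assuming they were spread evenly over the window."""
    return days * SECONDS_PER_DAY / images


days = window_days(START, END)
gap = seconds_per_image(days, IMAGES)
print(f"{days} days -> one image every {gap:.1f} s (about {round(gap)} s)")

# Alternative reading: count both endpoint days in full, giving a 12-day window.
print(f"{days + 1} days -> one image every {seconds_per_image(days + 1, IMAGES):.1f} s")
```

Over 11 days (950,400 seconds) the gap is about 40.7 seconds, which rounds to the 41 seconds cited. If both endpoint days are counted in full, the same count works out to roughly 44 seconds per image, so the headline figure appears to assume the 11-day window.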

Global Regulatory Crackdown: Six Jurisdictions Launch Investigations Simultaneously

This case comes amid broader, systematic scrutiny of Grok’s image-safety problems worldwide:

Australia: eSafety Commissioner Julie Inman Grant warned that problems with Grok generating non-consensual sexualized images are worsening, with complaints doubling in recent months and some involving potential child exploitation material.

Ireland: The Data Protection Commission (DPC) has launched a formal investigation under Irish data protection law into X Internet Unlimited Company (XIUC), the entity responsible for X’s operations in the EU.

United States, European Union, United Kingdom, France: Investigations are underway concurrently, amounting to unprecedented regulatory pressure across multiple jurisdictions.

Frequently Asked Questions

How might this class action affect xAI’s potential “platform immunity” defense?

Alex Chandra, a partner at IGNOS Legal Alliance, says courts may not accept a simple platform defense. He notes that a generative AI system may look like a platform at the level of user interaction, but should be treated as a product when its safety is assessed. Because CSAM cases carry heightened child-protection obligations, “very strict review standards” will apply, and companies may need to present the risk assessments and safeguards they put in place before deployment to show that they took proactive steps to prevent harm.

What is Masha’s Law, and why does it apply here?

Masha’s Law (18 U.S.C. § 2255) is a U.S. federal statute that gives victims of child sexual exploitation, including the creation, distribution, and possession of child sexual abuse material, a civil claim against those responsible, allowing them to recover actual damages or liquidated damages of $150,000. The key legal question is whether xAI can be considered a “creator” of CSAM and whether its third-party licensing structure can shield it from direct liability. How courts draw this line could have far-reaching implications for accountability across the AI-generated content industry.

What potential impact could this case have on the future development of AI image generation technology?

This is one of the first lawsuits seeking to hold an AI company directly accountable for generating CSAM that depicts identifiable minors. If courts find that AI companies are directly responsible for misuse of their models, the likely result is significantly stricter pre-deployment safety standards, including mandatory red-team testing, content-filtering mechanisms, and preset restrictions on high-risk outputs. That would fundamentally change how generative AI models are commercialized and released.