NVIDIA's Jensen Huang Hypes DLSS 5 as "ChatGPT Revolution for Graphics," Gets Roasted by Artists: "Just a Beauty Filter"

NVIDIA announced DLSS 5 at GTC 2026, claiming that AI neural rendering will reshape gaming graphics. Rendering engineers and concept artists in the industry were blunter: to them it is just a garbage filter that over-sharpens and smooths skin, in nicer packaging. This article consolidates NVIDIA’s official press releases and reports from multiple foreign media outlets, compiled by Dongqu Dongqu.


NVIDIA unveiled DLSS 5 at GTC 2026, positioning it as the most significant graphics breakthrough since real-time ray tracing in 2018. The technical description is thorough: a real-time neural rendering model analyzes each frame’s color and motion vectors, and the AI injects photorealistic lighting and materials at up to 4K resolution in real time, with developer controls for adjusting strength, color grading, and masking. More than 15 games are confirmed to support it, with major companies including Bethesda, Ubisoft, CAPCOM, and Tencent on the partner list. It is scheduled for release in fall 2026.

Jensen Huang stated in his keynote: “Ten years ago, GeForce brought AI to the world. Now, AI is reshaping computer graphics.” The statement sounds impressive. But the issue isn’t whether AI can improve visuals—it’s whether, in doing so, it also changes things that didn’t need improvement.

The gap between technical description and actual perception

DLSS 5’s architecture combines two systems: “structured data” ensures visual accuracy, while “generative AI” enhances aesthetics. NVIDIA emphasizes that developers retain control over the final effect; Bethesda also added that art teams will further adjust lighting, shadows, and final effects—“still under our artists’ control.”

DLSS 5 real-time neural rendering workflow (Source: NVIDIA)

Digital Foundry’s founder gave a positive review, saying “it’s been a long time since I saw such an astonishing demo.” That view is fairly mainstream in the industry. But reactions to the publicly shown demo tell a different story.

PC Gamer noted that the face of the character Grace Ashcroft was noticeably altered by the AI in the demo, and said outright that DLSS 5 “overwrites game characters according to AI aesthetic standards.” VGC compiled comments from industry insiders: Respawn rendering engineer Steve Karolewics described it as “over-contrast, sharpening, and skin smoothing filters”; game producer Danny O’Dwyer complained that these techniques “seem to irresistibly sexualize everything they touch”; concept artist Jeff Talbot was the most direct: “This is a garbage AI filter,” adding that each enhanced image “loses more character features than the original.”

The homogenization tendency of generative AI is not a new problem

The core of this controversy is understandable. The training data of generative AI models determines their aesthetic bias—usually favoring clear, smooth, saturated visuals that align with mainstream market preferences. When this logic is automatically applied to all game characters, carefully crafted art styles become objects for “optimization.”

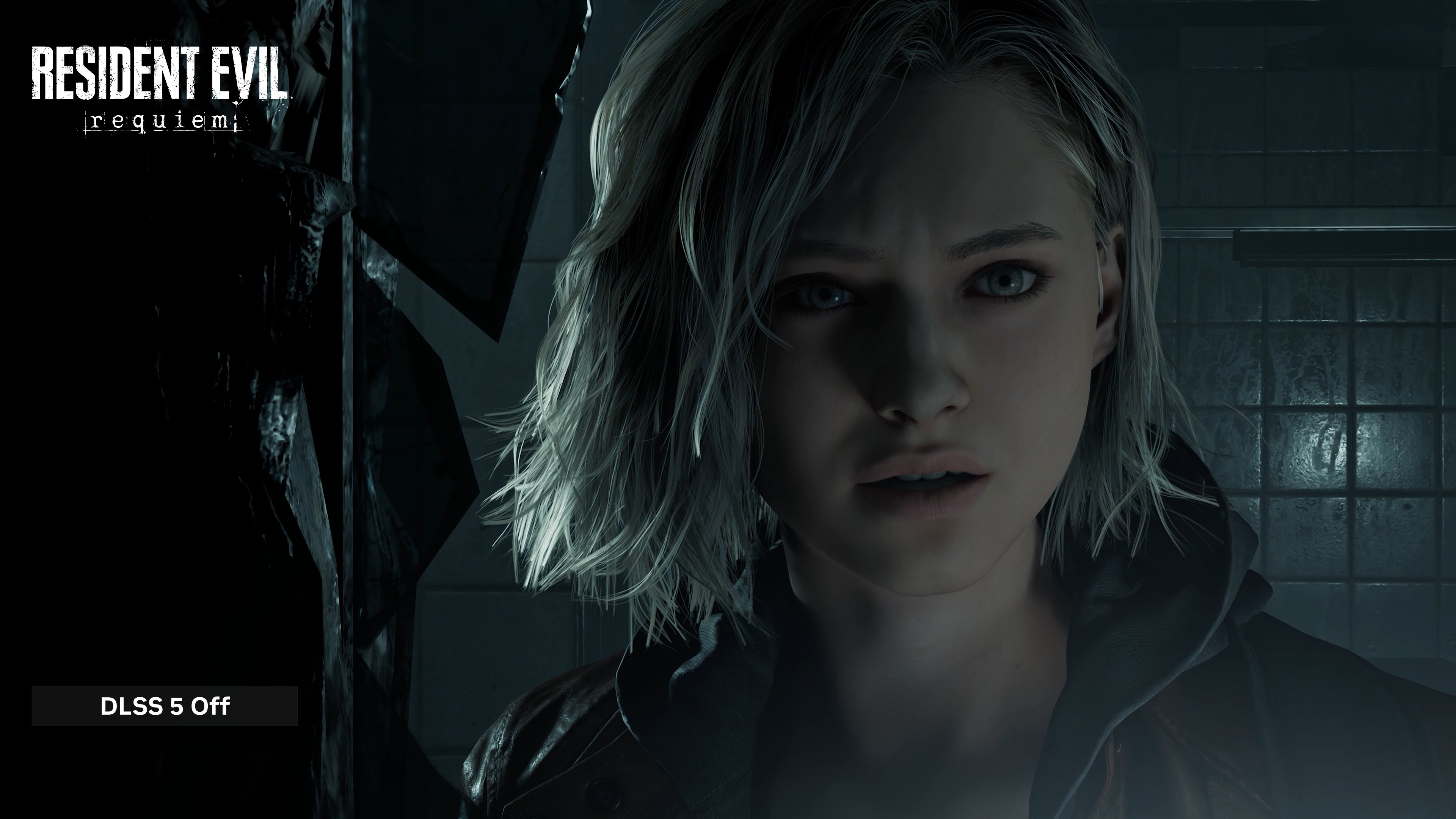

“Resident Evil: Village” DLSS 5 off (Source: NVIDIA)

“Resident Evil: Village” DLSS 5 on—notice the differences in lighting and material details (Source: NVIDIA)

Over a longer timeline, though, this isn’t the first time DLSS has sparked this kind of debate. When DLSS 3 was released, some players reported ghosting artifacts in certain scenes; DLSS 4 introduced Transformer models that improved image quality, but disputes over detail never fully disappeared. What’s different with DLSS 5 is that it explicitly builds “AI aesthetic judgment” into the core character rendering process.

PC Gamer also mentioned that generative AI has an inherent tendency toward homogenized art styles. This isn’t a mistake by individual developers but a structural issue in the model design itself. NVIDIA’s response is that developers can adjust strength, colors, and masks to control the enhancement scope. Technically correct. But the problem is, in an industry with tight development cycles and high cost pressures, how many teams will manually calibrate AI’s aesthetic biases for each character and scene?
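To make the “strength and mask” controls concrete, here is a minimal sketch of what such per-pixel control usually amounts to: a weighted blend between the original frame and the AI-enhanced frame. This is not NVIDIA’s API or DLSS 5’s actual implementation; the function names, the tiny two-pixel frame, and the blending formula are all assumptions chosen for illustration.

```python
# Illustrative only: a generic masked blend, not DLSS 5's real pipeline.
# All names here (blend_pixel, apply_enhancement) are hypothetical.

def clamp01(value):
    """Clamp a number to the range [0, 1]."""
    return max(0.0, min(1.0, value))


def blend_pixel(original, enhanced, strength, mask):
    """Blend one RGB pixel. A weight of 0 keeps the original, 1 takes the enhanced."""
    weight = clamp01(strength) * clamp01(mask)
    return tuple(o + weight * (e - o) for o, e in zip(original, enhanced))


def apply_enhancement(original, enhanced, mask, strength):
    """Blend two frames (lists of rows of RGB tuples) under a per-pixel mask."""
    return [
        [blend_pixel(o, e, strength, m) for o, e, m in zip(row_o, row_e, row_m)]
        for row_o, row_e, row_m in zip(original, enhanced, mask)
    ]


# Hypothetical 1x2 frame: the left pixel belongs to a character's face and is
# masked out (0.0); the right pixel is background and is fully eligible (1.0).
original = [[(0.125, 0.25, 0.5), (0.25, 0.25, 0.25)]]
enhanced = [[(1.0, 1.0, 1.0), (0.75, 0.75, 0.75)]]
mask = [[0.0, 1.0]]

result = apply_enhancement(original, enhanced, mask, strength=0.5)
print(result)
```

In this sketch the masked face pixel comes back unchanged while the background pixel moves halfway toward the enhanced value. The catch is that someone has to author that mask for every character and scene, which is exactly the manual calibration work the question above is about.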

Market logic behind 15 games

Character rendering comparison: DLSS 5 off (Source: NVIDIA)

Character rendering comparison: DLSS 5 on—AI’s handling of character faces is the controversy focus (Source: NVIDIA)

NVIDIA’s partners include Bethesda, Ubisoft, and CAPCOM, as well as Asian giants Tencent, NetEase, and NCSOFT. The 15 confirmed games span AAA titles like “Assassin’s Creed: Mirage,” “Hogwarts Legacy,” and “Starfield,” and live-service games like “Naraka: Bladepoint” and “Justice Mobile.” The list is broad, indicating that NVIDIA has put significant effort into promotion.

One structural point is worth noting: live-service games place different demands on visual quality than AAA single-player titles do. For games focused on retention and monetization, “better graphics” is a quantifiable business goal, and art style consistency is less of a priority. DLSS 5 may therefore be accepted far more readily in these games than in visually distinctive AAA titles.

The technology itself is neutral. The real issue is how, where, and with what defaults it is deployed. NVIDIA says developers have control, but default behaviors still reflect the judgments of whoever designed them. Bethesda says its artists remain in control, which may well be true for a project like “The Elder Scrolls IV: Oblivion Remastered,” but that cannot be a universal promise for every game adopting DLSS 5.

DLSS 5 is scheduled for release in fall 2026. By then, we will have more actual gameplay footage for comparison—not just NVIDIA’s carefully selected demo screenshots. Only then will the debate of “revolution or filter” have a more definitive answer.