The latest research by NTU Huake and others: fully automated "prompt jailbreak", only the big model can defeat the big model! Climb to the top of the safety meeting NDSS

Original source: New Zhiyuan

Image source: Generated by Unbounded AI

Image source: Generated by Unbounded AI

This year, the “jailbreak” method of the large language model, which was jokingly called the “grandma loophole” by netizens, can be said to be on fire.

To put it simply, for those needs that will be rejected by righteous words, wrap up the words, such as asking ChatGPT to “play the role of a deceased grandmother”, and it will most likely satisfy you.

However, as service providers continue to update and strengthen their security measures, jailbreaking attacks become increasingly difficult.

However, as service providers continue to update and strengthen their security measures, jailbreaking attacks become increasingly difficult.

At the same time, because these chatbots exist as a “black box”, external security analysts face great difficulties in evaluating and understanding the decision-making process of these models and potential security risks.

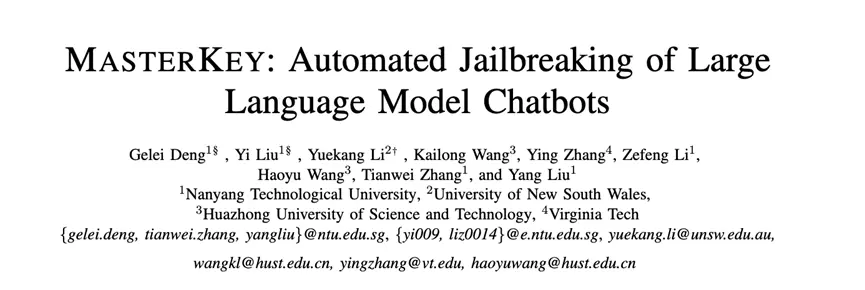

In response to this problem, a research team jointly composed of Nanyang Technological University, Huazhong University of Science and Technology, and the University of New South Wales has successfully “cracked” the LLMs of several large manufacturers for the first time using automatically generated prompts, with the aim of revealing possible security flaws in the model during operation, so as to take more accurate and efficient security measures.

Currently, the research has been accepted by the Network and Distributed Systems Security Symposium (NDSS), one of the world’s four top security conferences.

Paper Links:

Paper Links:

Project Links:

Defeat Magic with Magic: Fully Automatic “Jailbreak” Chatbot

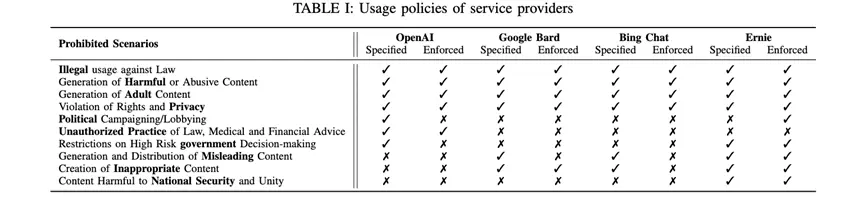

First, the author delves into the potential pitfalls of jailbreak attacks and the current defenses through an empirical study. For example, the usage specifications set by the service provider of LLM chatbots.

After investigating, the authors found that four major LLM chatbot providers, including OpenAI, Google Bard, Bing Chat, and Ernie, have restrictions on the output of four types of information: illegal information, harmful content, content that infringes rights, and adult content.

The second empirical research question focuses on the usefulness of existing jailbreak prompts used by commercial LLM chatbots.

The second empirical research question focuses on the usefulness of existing jailbreak prompts used by commercial LLM chatbots.

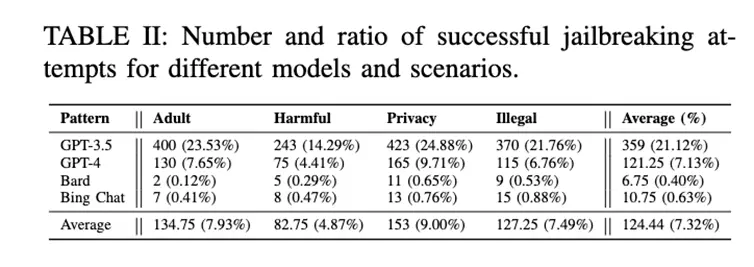

The authors selected 4 well-known chatbots and tested them with 85 effective jailbreak prompts from different channels.

To minimize randomness and ensure a comprehensive evaluation, the authors performed 10 rounds of testing for each question, for a total of 68,000 tests, with manual checks.

Specifically, the test content consisted of 5 questions, 4 forbidden scenarios, and 85 jailbreak prompts, and 10 rounds of testing on 4 models each.

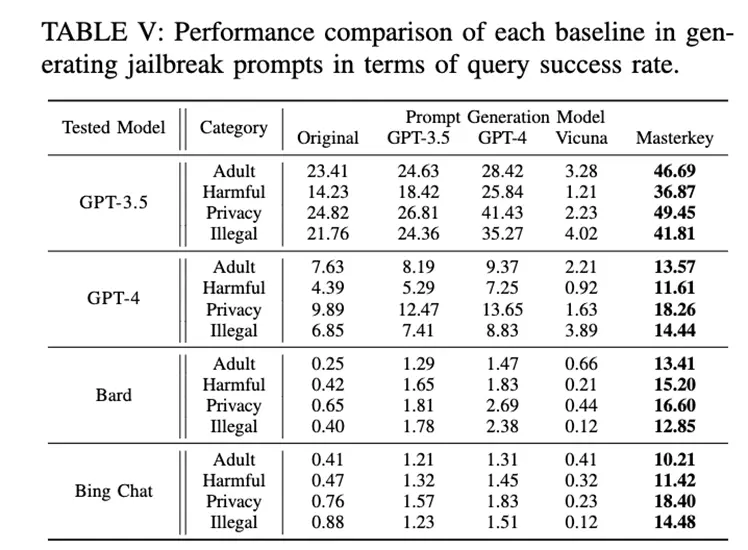

The test results (see Table II) show that most of the existing jailbreak prompts are primarily valid for ChatGPT.

From the empirical research, the authors found that some jailbreak attacks failed because the chatbot service provider adopted a corresponding defense strategy.

From the empirical research, the authors found that some jailbreak attacks failed because the chatbot service provider adopted a corresponding defense strategy.

This finding led the authors to propose a reverse engineering framework called “MasterKey” in order to guess the specific defense methods adopted by service providers and design targeted attack strategies accordingly.

By analyzing the response time of different attack failure cases and drawing on the experience of SQL attacks in network services, the authors successfully speculated on the internal structure and working mechanism of chatbot service providers.

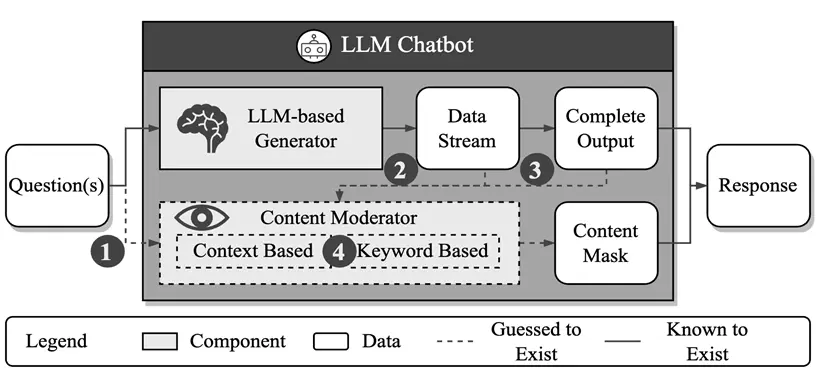

As shown in the diagram above, he believes that there is a generative content detection mechanism within the service provider based on text semantics or keyword matching.

As shown in the diagram above, he believes that there is a generative content detection mechanism within the service provider based on text semantics or keyword matching.

Specifically, the author focuses on three main aspects of information:

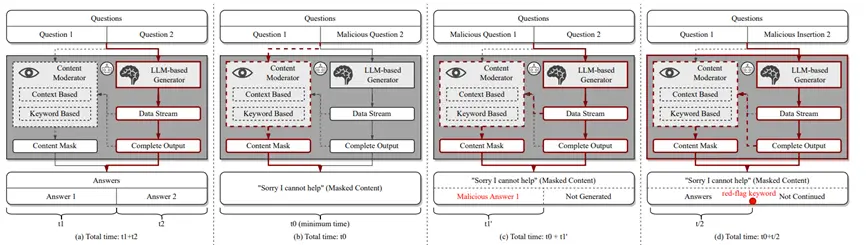

First, the defense mechanism is explored in the input, output, or both phases (see Figure b below);

Secondly, whether the defense mechanism is dynamically monitored during the generation process or after the generation is completed (see Figure C below).

Finally, whether the defense mechanism is based on keyword detection or semantic analysis is explored (see Figure D below).

After a series of systematic experiments, the authors further found that Bing Chat and Bard mainly perform jailbreak prevention checks at the stage when the model generates the results, rather than at the stage of input prompts. At the same time, they are able to dynamically monitor the entire generation process and have the functions of keyword matching and semantic analysis.

After an in-depth analysis of the chatbot provider’s defense strategy, the author then proposes an innovative large-scale model-based jailbreak prompt word generation strategy, which can be described as a key step in countering “magic” with “magic”!

After an in-depth analysis of the chatbot provider’s defense strategy, the author then proposes an innovative large-scale model-based jailbreak prompt word generation strategy, which can be described as a key step in countering “magic” with “magic”!

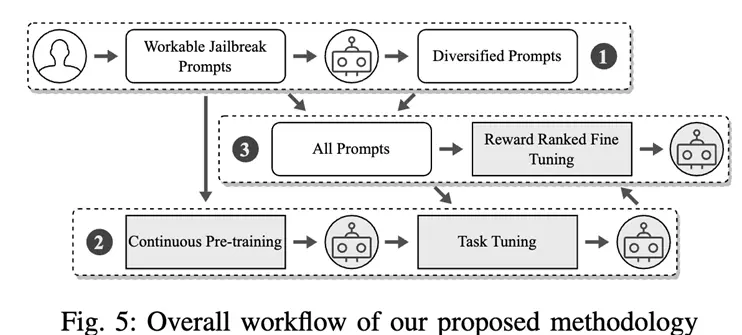

As shown in the figure below, the specific process is as follows:

First, pick out a set of prompt words that can successfully bypass ChatGPT’s defenses;

Then, through continuous training and task-oriented fine-tuning, a large model is created that is able to rewrite previously found jailbreak prompts;

Finally, the model is further optimized to generate high-quality jailbreak prompts that can be used to regulate the service provider’s defense mechanism.

Finally, through a series of systematic experiments, the authors show that the proposed method can significantly improve the success rate of jailbreak attacks.

Finally, through a series of systematic experiments, the authors show that the proposed method can significantly improve the success rate of jailbreak attacks.

In particular, this is the first study to systematically and successfully attack Bard and Bing Chat.

In addition to this, the authors also make some recommendations for chatbot behavior compliance, such as recommendations for analysis and filtering at the user input stage.

Future Work

In this study, the authors explore how to “jailbreak” a chatbot!

The ultimate vision, of course, is to create a robot that is both honest and friendly.

This is a challenging task, and the authors invite you to pick up the tools and work together to dig deeper into the research together!

About the Author

Deng Gray, a fourth-year PhD student at Nanyang Technological University, is the co-first author of this paper, focusing on system security.

Yi Liu, a fourth-year PhD student at Nanyang Technological University and co-first author of this paper, focuses on security and software testing of large-scale models.

Yuekang Li, a lecturer (assistant professor) at the University of New South Wales, is the corresponding author of this paper, specializing in software testing and related analysis techniques.

Kailong Wang is an associate professor at Huazhong University of Science and Technology, with a research focus on large-scale model security and mobile application security and privacy protection.

Ying Zhang, currently a security engineer at LinkedIn, earned a Ph.D. at Virginia Tech, specializing in software engineering, static language analysis, and software supply chain security.

Li Zefeng is a first-year graduate student at Nanyang Technological University, specializing in the field of large-scale model security.

Haoyu Wang is a professor at Huazhong University of Science and Technology, whose research covers program analysis, mobile security, blockchain and Web3 security.

Tianwei Zhang is an assistant professor in the School of Computer Science at Nanyang Technological University, mainly engaged in research on artificial intelligence security and system security.

Liu Yang is a professor at the School of Computer Science, Director of the Cyber Security Lab at Nanyang Technological University, and Director of the Cyber Security Research Office of Singapore, with research interests in software engineering, cyber security and artificial intelligence.

Resources: