Developer Guide for TEE

What is TEE? What is its security model? What are the common TEE vulnerabilities, and what are the security best practices for avoiding them?

Original Title: “Securing TEE Apps: A Developer’s Guide”

Authors: prateek, roshan, siddhartha & linguine (Marlin), krane (Asula)

Compiled by: Shew, GodRealmX

Since Apple announced Private Cloud Compute and NVIDIA introduced confidential computing in its GPUs, Trusted Execution Environments (TEEs) have become increasingly popular. Their confidentiality guarantees help protect user data (including private keys), while isolation ensures that the execution of programs deployed on them cannot be tampered with, whether by humans, other programs, or operating systems. It is therefore not surprising that TEEs are widely used to build products in the Crypto x AI field.

Like any new technology, TEE is going through an optimistic experimental phase. This article aims to give developers and general readers a basic conceptual guide to what TEE is, its security model, common vulnerabilities, and best practices for using TEE securely. (Note: To make the text easier to understand, we have deliberately replaced some TEE terminology with simpler equivalents.)

What is TEE

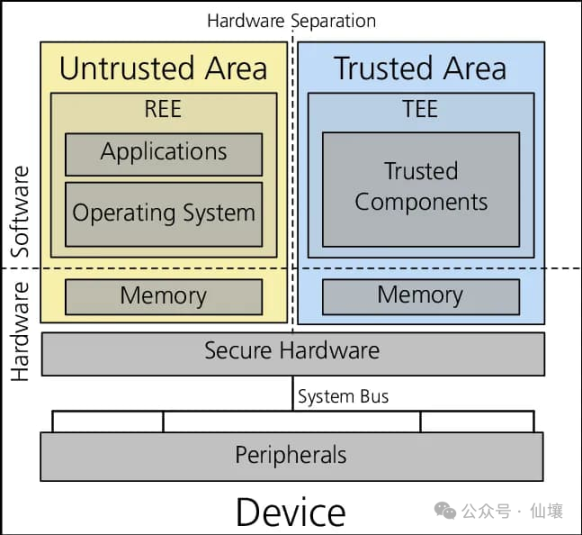

TEE is an isolated environment in a processor or data center, where programs can run without any interference from the rest of the system. To prevent interference from other parts, a series of designs are needed, mainly including strict access control, that is, controlling the access of other parts of the system to the programs and data inside the TEE. Currently, TEE is ubiquitous in mobile phones, servers, PCs, and cloud environments, making it very accessible and reasonably priced.

This may sound vague and abstract. In practice, different servers and cloud providers implement TEE in different ways, but the fundamental purpose is always the same: to prevent the programs inside the TEE from being interfered with by other programs.

Most readers may use biometric information to log in to devices, such as unlocking their phones with fingerprints. But how do we ensure that malicious applications, websites, or jailbroken operating systems cannot access and steal this biometric information? In fact, in addition to encrypting the data, the circuits in TEE devices simply do not allow any program to access the memory and processor areas occupied by sensitive data.

The hardware wallet is another example of TEE application scenarios. The hardware wallet is connected to the computer and communicates with it in a sandbox, but the computer cannot directly access the mnemonic stored in the hardware wallet’s memory. In both of the above cases, users trust that the device manufacturer can correctly design the chip and provide appropriate firmware updates to prevent the export or viewing of confidential data within the TEE.

Security Model

Unfortunately, there are many TEE implementations (Intel SGX, Intel TDX, AMD SEV, AWS Nitro Enclaves, ARM TrustZone), and each requires its own security model and analysis. In the rest of this article, we mainly discuss Intel SGX, Intel TDX, and AWS Nitro, because these systems have more users and mature, readily available development tools. They are also the TEE systems most commonly used in Web3.

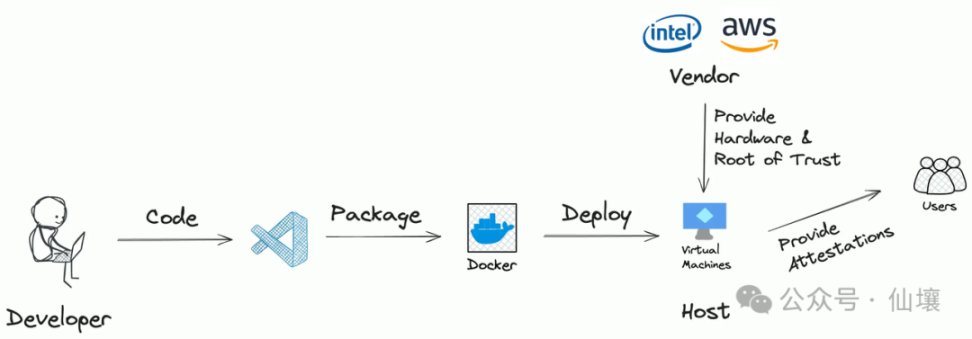

Generally speaking, the workflow of the application deployed in TEE is as follows:

- A developer writes some code, which may or may not be open source.

- Then, the developer packages the code into an Enclave Image File (EIF), which can run in TEE.

- EIF is hosted on a server with a TEE system. In some cases, developers can directly use a personal computer with TEE to host EIF to provide services externally.

- Users can interact with the application through predefined interfaces.

Clearly, there are three potential risks here:

- Developers: What does the code in the EIF actually do? It may not match the business logic that the project advertises publicly, and it may steal users’ private data.

- Server: Is the TEE server running the expected EIF file? Or is the EIF really being executed inside the TEE? The server may also run other programs inside the TEE.

- Supplier: Is TEE’s design secure? Is there a backdoor that leaks all TEE’s data to the supplier?

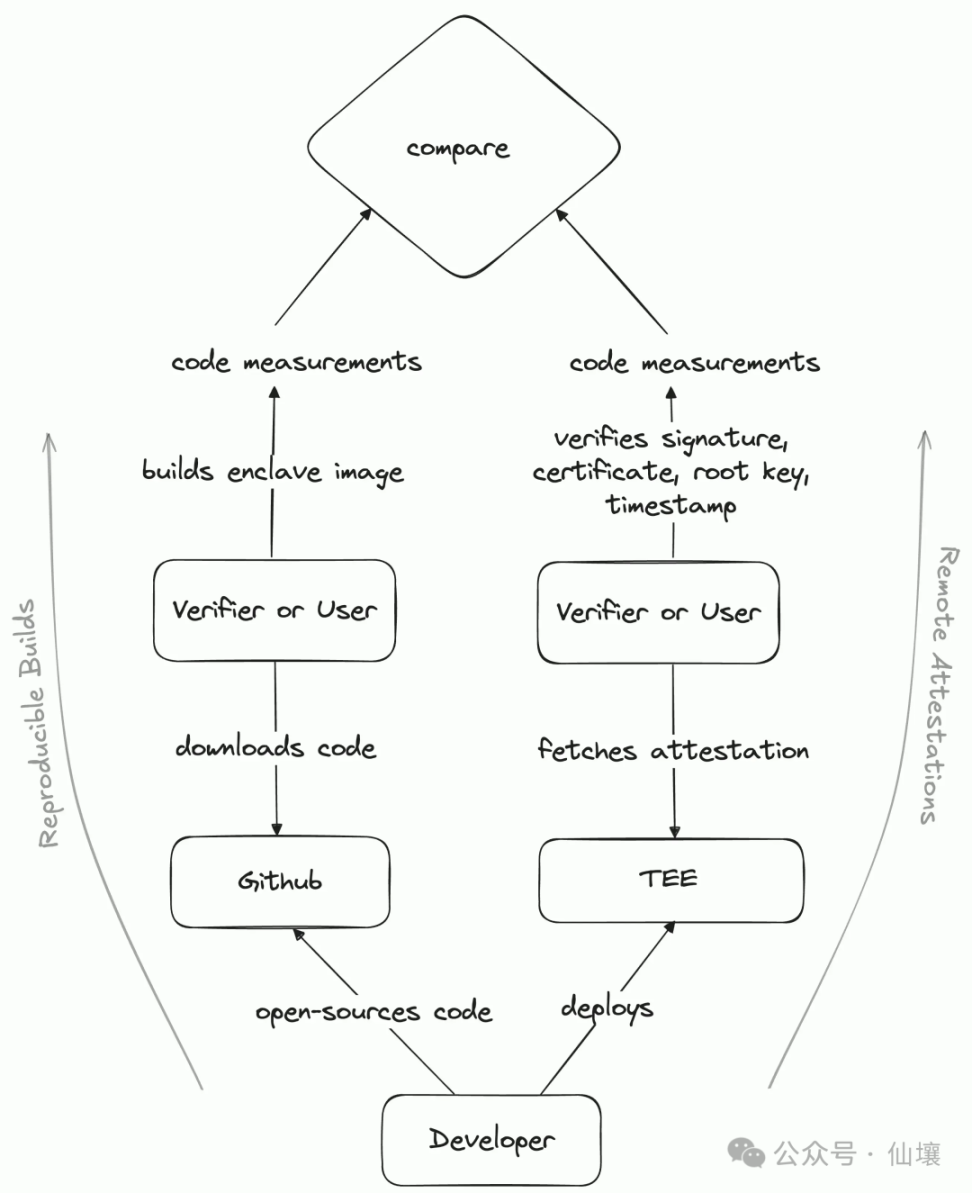

Fortunately, TEEs now have solutions that address the above risks, namely reproducible builds and remote attestation.

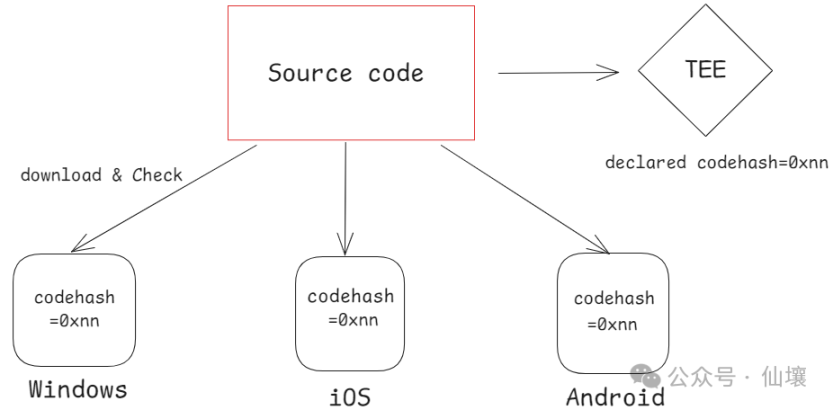

So what is a reproducible build? Modern software development often imports a large number of dependencies, such as external tools, libraries, or frameworks, and these dependencies may carry hidden risks. Package managers such as npm record a hash of each dependency's contents as its unique identifier. When npm finds that a dependency does not match the recorded hash, the dependency can be considered modified.
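The hash check described above can be sketched in a few lines. npm lock files record an `integrity` string of the form `sha512-<base64 digest>`; the helper names and the sample bytes below are hypothetical, and this is a minimal illustration rather than npm's actual implementation.

```python
import base64
import hashlib
import hmac


def sri_digest(data: bytes, algorithm: str = "sha512") -> str:
    """Return an integrity string such as 'sha512-<base64 digest>'."""
    digest = hashlib.new(algorithm, data).digest()
    return f"{algorithm}-{base64.b64encode(digest).decode('ascii')}"


def matches_recorded_integrity(data: bytes, recorded: str) -> bool:
    """Check downloaded bytes against the integrity string recorded earlier."""
    algorithm, _, _ = recorded.partition("-")
    if algorithm not in ("sha256", "sha384", "sha512"):
        return False  # unknown or missing algorithm: treat as a mismatch
    # Constant-time comparison of the recomputed and recorded strings.
    return hmac.compare_digest(sri_digest(data, algorithm), recorded)


# Hypothetical package contents and the value that would be recorded for them.
tarball = b"example package contents"
recorded = sri_digest(tarball)
```

Any change to the package bytes, however small, produces a different digest, so `matches_recorded_integrity` returns `False` for a modified dependency.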

Reproducible builds can be thought of as a set of standards whose goal is that building the same code on any device, following a predetermined process, always yields the same hash value. In practice, artifacts other than a plain hash can also serve as the identifier, so here we use the general term code measurement.

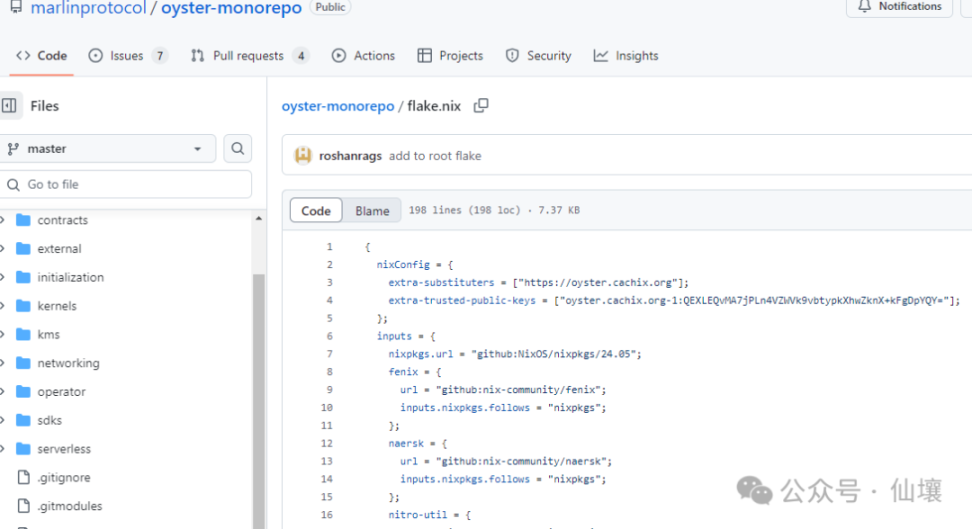

Nix is a commonly used tool for reproducible builds. After the program’s source code is made public, anyone can inspect the code to ensure that developers have not inserted any malicious content. Anyone can use Nix to build the code and check whether the resulting product has the same code measurement/hash as the one deployed by the project in the production environment. But how do we know the code measurement value of the program in the TEE? This involves the concept of ‘remote attestation’.
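As a rough sketch of that comparison, the snippet below hashes a set of build outputs into a single value and compares it with the measurement reported for the deployed program. Real TEE platforms define their own measurement formats; `measure_artifacts`, the SHA-384 choice, and the in-memory `dict` of files are assumptions made for illustration only.

```python
import hashlib
import hmac


def measure_artifacts(artifacts: dict[str, bytes]) -> str:
    """Hash a set of build outputs into one hex digest, independent of dict order."""
    outer = hashlib.sha384()
    for path in sorted(artifacts):
        # Length-prefix each name and content so field boundaries are unambiguous.
        for field in (path.encode("utf-8"), artifacts[path]):
            outer.update(len(field).to_bytes(8, "big"))
            outer.update(field)
    return outer.hexdigest()


def build_matches_deployment(rebuilt: dict[str, bytes], deployed_measurement: str) -> bool:
    """True if a local rebuild yields the same measurement as the deployed program."""
    return hmac.compare_digest(measure_artifacts(rebuilt), deployed_measurement)
```

Sorting the paths before hashing matters: a measurement is only useful for reproducibility if incidental details such as file enumeration order cannot change it.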

Remote attestation is a signed message from the TEE platform (the trusted party) containing code measurement values, the TEE platform version, and so on. It lets external observers know that a program is running in a secure location inaccessible to anyone (a genuine TEE of a specific platform version).

Reproducibility and remote attestation enable any user to know the actual code and TEE platform version information running inside the TEE, thereby preventing developers or servers from malicious activities.
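A verifier's side of this can be sketched as follows. The sketch assumes the attestation's signature and certificate chain have already been validated with the platform's own tooling, and it only shows the policy step that follows; `AttestationClaims`, its fields, and the challenge nonce used to rule out replayed attestations are hypothetical names, not any platform's API.

```python
import hmac
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationClaims:
    """Fields assumed to come from an attestation whose signature was already verified."""
    code_measurement: str  # hex string reported by the TEE platform
    nonce: bytes           # challenge echoed back by the TEE


def accept_attestation(
    claims: AttestationClaims, expected_measurement: str, challenge_nonce: bytes
) -> tuple[bool, str]:
    """Accept only a fresh attestation for the code we rebuilt ourselves."""
    if not hmac.compare_digest(claims.nonce, challenge_nonce):
        return False, "nonce mismatch: attestation may be replayed"
    if not hmac.compare_digest(claims.code_measurement, expected_measurement):
        return False, "code measurement does not match the reproducible build"
    return True, "ok"


# The verifier picks a fresh random challenge for every attestation request.
challenge = secrets.token_bytes(16)
```

The expected measurement should come from the verifier's own reproducible build, not from the server being checked.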

However, in the case of TEE, it is always necessary to trust its suppliers. If the TEE supplier behaves maliciously, they can directly forge remote attestation. Therefore, if suppliers are considered as potential attack vectors, relying solely on TEE should be avoided, and it is preferable to combine them with ZK or consensus protocols.

The Charm of TEE

In our opinion, the TEE’s popularity, especially in terms of its deployment friendliness for AI Agents, is mainly due to the following factors:

- Performance: TEE can run LLM models with performance and cost similar to regular servers. However, zkML requires a lot of computational power to generate zk proofs for LLM.

- GPU Support: NVIDIA provides TEE computation support in its latest GPU series (Hopper, Blackwell, etc.)

- Accuracy: LLMs are non-deterministic; multiple inputs of the same prompt will yield different results. Therefore, multiple nodes (including observers attempting to create fraud proofs) may never reach a consensus on the operation of LLMs. In this scenario, we can trust that the LLM running in the TEE cannot be manipulated by malicious actors, and the program within the TEE always runs as intended, making the TEE more suitable for ensuring the reliability of LLM inference results than opML or consensus.

- Confidentiality: The data in the TEE is not visible to external programs. Therefore, the private keys generated or received in the TEE are always secure. This feature can be used to assure users that any message signed by this key comes from the internal programs of the TEE. Users can confidently host the private key in the TEE and set some signing conditions, and can confirm that the signature from the TEE meets the pre-set signing conditions.

- Networking: Programs running in the TEE can securely access the Internet through certain tools (without revealing queries or responses to servers running outside the TEE, while still providing guarantees for correct data retrieval to third parties). This is useful for retrieving information from third-party APIs and can be used to outsource computation to trusted but proprietary model providers.

- Write Access: Unlike the zk approach, code running in TEE can construct messages (whether tweets or transactions) and send them out via API and RPC networks.

- Developer-friendly: TEE-related frameworks and SDKs allow people to write code in any language and easily deploy programs into TEE, just as they would on a cloud server.
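The signing conditions mentioned under Confidentiality can be pictured as a small policy check in front of the key. The sketch below is hypothetical throughout: `PolicySigner`, the transfer fields, and the limits are invented for illustration, and HMAC-SHA256 stands in for the asymmetric signature scheme a real deployment would use.

```python
import hashlib
import hmac
import json
import secrets
from typing import Optional


class PolicySigner:
    """Holds a key that never leaves this object and signs only policy-compliant requests."""

    def __init__(self, max_amount: int, allowed_recipients: frozenset[str]):
        self._key = secrets.token_bytes(32)  # generated inside the (hypothetical) enclave
        self._max_amount = max_amount
        self._allowed = allowed_recipients

    def sign_transfer(self, recipient: str, amount: int) -> Optional[str]:
        """Return a tag over the transfer, or None if it violates the pre-set conditions."""
        if recipient not in self._allowed or not (0 < amount <= self._max_amount):
            return None
        message = json.dumps({"to": recipient, "amount": amount}, sort_keys=True).encode()
        # Stand-in for a real signature: HMAC keyed with the enclave-held secret.
        return hmac.new(self._key, message, hashlib.sha256).hexdigest()
```

Because the key exists only inside the signer, a valid tag implies that the policy check ran, which is the property the attested code is meant to guarantee.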

Regardless of the pros and cons, it is currently difficult to find alternative solutions for many use cases that heavily rely on TEE. We believe that the introduction of TEE further expands the development space for on-chain applications, which may drive the emergence of new use cases.

TEE is not a silver bullet

Programs running in TEE are still susceptible to a range of attacks and bugs, just as smart contracts are. For simplicity, we classify the potential vulnerabilities as follows:

- Developer Negligence

- Runtime Vulnerability

- Architectural design defects

- Operational issues

Developer Negligence

Whether intentionally or not, developers can undermine the security guarantees of programs in TEE through the code they write. This includes:

- Opaque code: The security model of TEE relies on external verifiability, so code transparency is crucial for verification by external third parties.

- Unverified code measurement: Even if the code is public, it provides no guarantee unless a third party rebuilds the code and checks the resulting code measurement against the one in the remote attestation. Skipping this step is like receiving a zk proof but never verifying it.

- Unsafe Code: Even if keys are carefully generated and managed in TEE, the logic in the code may leak them outside the TEE during external calls. The code may also contain backdoors or vulnerabilities. Compared with traditional backend development, TEE development requires software development and auditing processes that meet a high standard, similar to smart contract development.

- Supply Chain Attacks: Modern software development relies heavily on third-party code. Supply chain attacks pose a significant threat to the integrity of TEE.

Runtime Vulnerability

Developers, no matter how cautious they are, can still become victims of runtime vulnerabilities. Developers must carefully consider whether any of the following will affect the security guarantees of their projects:

- Dynamic Code: It may not always be possible to keep all code transparent. Sometimes the use case itself requires dynamically executing opaque code that is loaded into the TEE at runtime. Such code can easily leak secrets or break invariants, so it must be carefully guarded against.

- Dynamic Data: Most applications use external APIs and other data sources during execution. The security model extends to include these data sources, which play the same role as oracles in DeFi. Incorrect or outdated data can lead to disasters. For example, an AI Agent may rely excessively on an external LLM service such as Claude.

- Insecure and unstable communication: TEE needs to run inside a server that contains TEE components. From a security perspective, the server running the TEE is effectively a perfect man-in-the-middle (MitM) between the TEE and the outside world. The server can not only eavesdrop on the TEE’s external connections and view the content being sent, but also filter by IP, restrict connections, and inject packets into a connection to deceive one party into thinking they come from the other.

- For example, a matching engine in TEE that processes encrypted transactions still cannot guarantee fair ordering (MEV resistance), because routers, gateways, and hosts can still drop, delay, or prioritize packets based on their source IP address.

Architectural Defects

The technology stack used by TEE applications should be handled with caution. The following issues may arise when building TEE applications:

- Applications with a larger attack surface: The attack surface of an application refers to the number of code modules that need to be completely secure. Code with a larger attack surface is very difficult to audit and may hide bugs or exploitable vulnerabilities. Reducing the attack surface often conflicts with developer experience. For example, TEE programs that rely on Docker have a much larger attack surface than those that do not, and enclaves that rely on a full-fledged operating system have a larger attack surface than TEE programs that use the most lightweight operating systems.

- Portability and Liveness: In Web3, applications must be resistant to censorship. Anyone should be able to launch a TEE and take over from inactive participants, which requires the applications within the TEE to be portable. The biggest challenge here is the portability of keys. Some TEE systems have key derivation mechanisms, but once such a mechanism is used, other servers cannot locally generate the same keys as the original TEE program. This typically restricts a TEE program to a single machine, which is not sufficient for portability.

- Unsafe Trust Anchor: For example, when running an AI Agent in TEE, how can one verify that a given address belongs to that Agent? If the system is not carefully designed, the true trust anchor could be an external third party or a key management platform rather than the TEE itself.
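One common way to keep the TEE itself as the trust anchor is to have the enclave commit to its public key inside the attestation, so that anyone can check that a key really belongs to the attested program. The sketch below assumes the attestation carries a free-form user-data field holding the SHA-256 hash of the public key; that convention and the function name are assumptions for illustration, and deriving a chain address from the key is left out because it is chain-specific.

```python
import hashlib
import hmac


def key_is_bound_to_enclave(claimed_public_key: bytes, attested_user_data: bytes) -> bool:
    """True if the attested user-data field equals SHA-256 of the claimed public key."""
    expected = hashlib.sha256(claimed_public_key).digest()
    return hmac.compare_digest(expected, attested_user_data)


# Hypothetical values: the enclave generated this key and committed to its hash.
agent_public_key = b"\x04" + bytes(64)
user_data_from_attestation = hashlib.sha256(agent_public_key).digest()
```

If the check passes and the attestation itself verifies, trust in the key reduces to trust in the attested code, with no separate key management platform in the path.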

Operational Issues

Last but not least, there are some practical considerations about how to actually operate a server that runs TEE programs:

- Unsafe Platform Versions: TEE platforms occasionally receive security updates, which are reflected as platform versions in remote attestation. If your TEE is not running on a secure platform version, hackers can steal keys from the TEE using known attack vectors. Even worse, your TEE may be running on a secure platform version today, but tomorrow it may become unsafe.

- No Physical Security: Despite your best efforts, TEE may be susceptible to side-channel attacks, which typically require physical access and control of the server where TEE is located. Therefore, physical security is an important layer of defense in depth. A related concept is cloud attestation, where you can prove that TEE is running in a cloud data center with physical security assurance.
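For the platform version issue in particular, a verifier can pin a minimum acceptable version and require attestations to be recent, so that a TEE that was safe yesterday is re-checked today. The dotted version format, the one-hour default, and the function names below are hypothetical; real platforms report versions in their own formats.

```python
def parse_version(text: str) -> tuple[int, ...]:
    """Turn a dotted version such as '1.10.0' into a tuple that compares numerically."""
    return tuple(int(part) for part in text.split("."))


def platform_is_acceptable(
    attested_version: str,
    minimum_version: str,
    attested_at: float,
    now: float,
    max_age_seconds: float = 3600.0,
) -> bool:
    """Require a recent attestation from a platform at or above the minimum version."""
    fresh = 0 <= now - attested_at <= max_age_seconds
    return fresh and parse_version(attested_version) >= parse_version(minimum_version)
```

Comparing parsed tuples rather than raw strings avoids the classic mistake where "1.10.0" sorts before "1.9.5". Raising `minimum_version` after a security advisory then rejects every TEE that has not been updated.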

Building Secure TEE Programs

We divide our recommendations into the following points:

- The safest solution

- Necessary precautions

- Advice based on use cases

1. The safest solution: no external dependencies

Creating highly secure applications may involve eliminating external dependencies, such as external inputs, APIs, or services, to reduce the attack surface. This approach ensures that the application runs independently without external interactions that could compromise its integrity or security. While this strategy may limit the diversity of the program’s functionality, it can provide a very high level of security.

If the model is running locally, this level of security can be achieved for most CryptoxAI use cases.

2. Necessary precautions

Regardless of whether the application has external dependencies, the following measures are required.

Treat TEE applications as smart contracts, not backend applications: update infrequently and test strictly.

Building a TEE program should be as rigorous as writing, testing, and updating a smart contract. Like smart contracts, TEEs operate in a highly sensitive and immutable environment, where erroneous or unexpected behavior can lead to serious consequences, including a complete loss of funds. Thorough audits, extensive testing, and minimal, carefully audited updates are essential to ensure the integrity and reliability of TEE-based applications.

Audit code and check build pipeline

The security of an application depends not only on the code itself, but also on the tools used in the build process. A secure build pipeline is essential to prevent breaches. The TEE only guarantees that the code provided will work as intended, but cannot fix defects introduced during the build process.

To reduce risk, code must be rigorously tested and audited to eliminate errors and prevent unnecessary information leakage. In addition, reproducible builds play a crucial role, especially when the code is developed by one party and used by another. Reproducible builds allow anyone to verify that the programs executed within the TEE match the original source code, ensuring transparency and trust. Without a reproducible build, it is nearly impossible to determine the exact content of the executable program within the TEE, which compromises the security of the application.

For example, the source code for DeepWorm (a project that runs a worm brain simulation model in a TEE) is completely open source. The executors within the TEE are built reproducibly using Nix pipelines.

Use audited or verified libraries

When handling sensitive data in TEE programs, only use audited libraries for key management and private data processing. Unaudited libraries may expose keys and compromise the security of the application. Prioritize thoroughly reviewed, security-focused dependencies to maintain the confidentiality and integrity of the data.

Always verify proofs from TEE

Users interacting with TEE must verify the remote attestation, or equivalent proof, generated by the TEE to ensure secure and trustworthy interaction. Without these checks, the server may manipulate the response, making it impossible to distinguish between genuine TEE output and tampered data. Remote attestation provides critical evidence about the codebase and configuration running in TEE, from which we can determine whether the program running inside the TEE is consistent with our expectations.

Specific attestations can be verified on-chain (IntelSGX, AWSNitro), off-chain using ZK proofs (IntelSGX, AWSNitro), or by users themselves or by managed services such as t16z or MarlinHub.

3. Recommendations that depend on the use case

According to the target use case and structure of the application, the following tips may help make your application more secure.

Ensure that user interactions with TEE are always performed over a secure channel

The server where the TEE is located is essentially untrusted. The server can intercept and modify communications. In some cases, it may be acceptable for the server to read data without modifying it, while in other cases, even reading data may be unacceptable. To mitigate these risks, it is essential to establish a secure end-to-end encrypted channel between the user and the TEE. At a minimum, ensure that each message carries a signature that verifies its authenticity and source. In addition, users should always check the remote attestation provided by the TEE to verify that they are communicating with the correct TEE. This ensures the integrity and confidentiality of the communication.

For example, Oyster is able to support secure TLS issuance through the use of CAA records and RFC8657. In addition, it provides a TEE native TLS protocol called Scallop, which does not rely on WebPKI.
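To make the minimum requirement concrete, the sketch below authenticates each message with a per-session key and a counter, so that modification, replay, dropping, or reordering by the host is detected. It is not Oyster's or Scallop's protocol: the class is hypothetical, the session key is assumed to come from a key exchange bound to the remote attestation, only one direction is shown (each direction should use its own key), and HMAC provides integrity only, so confidentiality would additionally need encryption.

```python
import hashlib
import hmac
import secrets


class AuthenticatedChannel:
    """One direction of a channel whose frames are tagged and strictly ordered."""

    def __init__(self, session_key: bytes):
        self._key = session_key
        self._send_counter = 0
        self._recv_counter = 0

    def seal(self, payload: bytes) -> bytes:
        """Prefix the payload with a counter and an HMAC tag over counter + payload."""
        counter = self._send_counter.to_bytes(8, "big")
        self._send_counter += 1
        tag = hmac.new(self._key, counter + payload, hashlib.sha256).digest()
        return counter + tag + payload

    def open(self, frame: bytes) -> bytes:
        """Verify the tag and the expected counter, then return the payload."""
        if len(frame) < 40:
            raise ValueError("frame too short")
        counter, tag, payload = frame[:8], frame[8:40], frame[40:]
        expected = hmac.new(self._key, counter + payload, hashlib.sha256).digest()
        if not hmac.compare_digest(tag, expected):
            raise ValueError("bad tag: frame was modified or forged")
        if int.from_bytes(counter, "big") != self._recv_counter:
            raise ValueError("unexpected counter: frame was replayed, dropped, or reordered")
        self._recv_counter += 1
        return payload
```

The untrusted host can still delay or discard frames, but it cannot alter them or replay them without the receiver noticing.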

Know that TEE memory is transient

TEE memory is transient, which means that when TEE is turned off, its contents (including encryption keys) will be lost. Without a secure mechanism to save this information, critical data may become permanently inaccessible, potentially causing financial or operational difficulties.

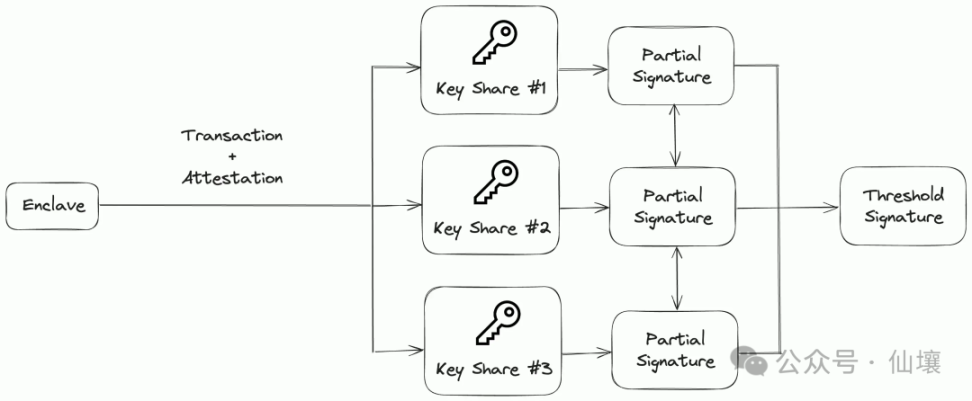

A multi-party computation (MPC) network with decentralized storage systems such as IPFS can be used as a solution to this problem. The MPC network splits the key into multiple nodes, ensuring that no single node holds the complete key while allowing the network to rebuild the key when needed. Data encrypted with this key can be securely stored on IPFS.

If necessary, the MPC network can provide keys to a new TEE server running the same image, provided certain conditions are met. This approach ensures flexibility and strong security, maintaining data accessibility and confidentiality even in untrusted environments.
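The key-splitting idea can be illustrated with Shamir secret sharing, one standard threshold scheme. This is a simplified sketch under stated assumptions: the function names are hypothetical, the key is treated as an integer, and the key is reconstructed in one place, whereas a production MPC network would add authentication of shares, attestation checks before release, and often avoids reconstructing the key at all.

```python
import secrets

PRIME = 2**521 - 1  # a Mersenne prime, large enough to hold a 256-bit key


def split_secret(secret: int, threshold: int, shares: int) -> list[tuple[int, int]]:
    """Split `secret` into `shares` points; any `threshold` of them recover it."""
    if not (0 <= secret < PRIME) or not (1 <= threshold <= shares):
        raise ValueError("invalid parameters")
    # Random polynomial of degree threshold - 1 whose constant term is the secret.
    coefficients = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]

    def evaluate(x: int) -> int:
        result = 0
        for coefficient in reversed(coefficients):  # Horner's rule
            result = (result * x + coefficient) % PRIME
        return result

    return [(x, evaluate(x)) for x in range(1, shares + 1)]


def recover_secret(points: list[tuple[int, int]]) -> int:
    """Lagrange-interpolate the polynomial at x = 0 to recover the secret."""
    secret = 0
    for i, (xi, yi) in enumerate(points):
        numerator, denominator = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                numerator = (numerator * -xj) % PRIME
                denominator = (denominator * (xi - xj)) % PRIME
        secret = (secret + yi * numerator * pow(denominator, -1, PRIME)) % PRIME
    return secret
```

With `split_secret(key, 3, 5)`, any three of the five nodes can jointly hand the key to a new TEE, while fewer than three learn nothing about it.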

![image](https://img.gateio.im/social/moments-db0051b56114c59f585033373a8ab946)

There is another solution: the TEE hands the relevant transactions to different MPC servers, which sign them, aggregate the signatures, and finally put the transactions on the chain. This approach is much less flexible and cannot be used to hold API keys, passwords, or arbitrary data (there is no trusted third-party storage service).

Reduced Attack Surface

For security-critical use cases, it is worth reducing peripheral dependencies as much as possible, even at the expense of developer experience. For example, Dstack comes with a minimal Yocto-based kernel that contains only the modules Dstack needs to work. It might even be worth using an older technology like SGX (over TDX), because it does not require a bootloader or operating system to be part of the TEE.

Physical Isolation

Physically isolating the TEE from possible human intervention can further enhance its security. While hosting TEE servers in data centers or with cloud providers offers a degree of physical security, projects like Spacecoin are exploring a rather interesting alternative: space. The SpaceTEE paper relies on security measures, such as measuring inertia after launch, to verify whether the satellite deviates from expectations while entering orbit.

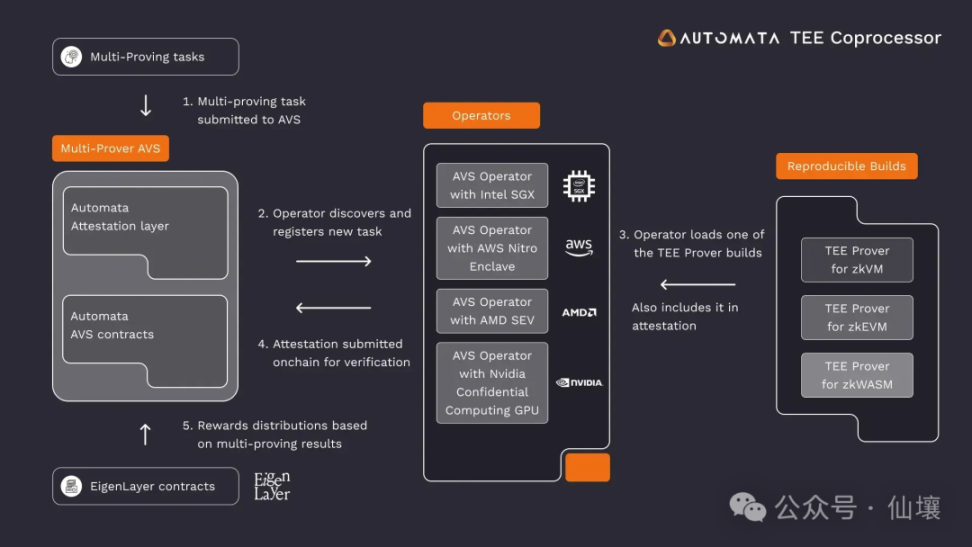

Multiprovers

Just as Ethereum relies on multiple client implementations to reduce the risk of bugs that affect the entire network, multiprovers use different TEE implementations to improve security and resiliency. By running the same computational steps across multiple TEE platforms, multiprover validation ensures that a vulnerability in one TEE implementation does not compromise the entire application. This approach requires the computation to be deterministic, or requires a definition of consensus between the TEE implementations in non-deterministic cases, but it offers significant benefits such as fault isolation, redundancy, and cross-validation, making it a good choice for applications that require reliability guarantees.
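For the deterministic case, cross-validation can be as simple as accepting an output only when enough independent TEE platforms report the same bytes. The function name, the platform labels, and the majority-quorum rule below are illustrative assumptions, and each result is assumed to have been attested and verified separately.

```python
from collections import Counter
from typing import Optional


def cross_validate(results: dict[str, bytes], quorum: int) -> Optional[bytes]:
    """Return the output that at least `quorum` TEE platforms agree on, else None."""
    if not results:
        return None
    if quorum * 2 <= len(results):
        # Below a strict majority, two different outputs could both reach quorum.
        raise ValueError("quorum must be a strict majority of the platforms")
    winner, votes = Counter(results.values()).most_common(1)[0]
    return winner if votes >= quorum else None
```

Requiring all platforms to agree gives the strongest protection against a flaw in any single TEE implementation, while a majority quorum trades some of that protection for liveness when one platform is unavailable or faulty.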

Looking to the Future

TEE has clearly become a very exciting field. As mentioned earlier, the ubiquity of AI and its continuous access to sensitive user data mean that large tech companies like Apple and NVIDIA are building TEE into their products and offering it to their users.

On the other hand, the crypto community has always been very focused on security. As developers attempt to extend on-chain applications and use cases, we have seen TEE become popular as a solution that offers the right trade-off between functionality and trust assumptions. While TEE is not as trust-minimizing as a complete ZK solution, we expect TEE to become the approach through which Web3 companies and large tech companies gradually integrate their products.